What is MCP in AI? This question is increasingly important as artificial intelligence grows more capable and interconnected. The Model Context Protocol (MCP) is rapidly emerging as the universal standard for connecting AI models to external tools, databases, and services. In this comprehensive guide, we’ll demystify MCP: what it is, how it works, why it matters, and how it’s transforming AI integration. Whether you’re a developer, business leader, or simply AI-curious, you’ll learn how MCP enables secure, efficient, and future-proof connections across the AI landscape.

Table of Contents

- MCP in AI: An Overview

- Origins and Evolution of Model Context Protocol

- How MCP Differs from Traditional AI Integration

- MCP Architecture: Hosts, Clients, and Servers

- Core Protocol Mechanics: Tools, Resources, and Prompts

- Standardizing AI Integrations with MCP

- Security and Privacy in MCP

- Real-World Use Cases of MCP

- Comparing MCP and APIs: Efficiency, Cost, and Scale

- Measuring ROI: Business Impact of MCP

- Emerging Trends and Future Directions for MCP

- Challenges in Adopting MCP

- Clarifying MCP vs. Other AI Acronyms

- Frequently Asked Questions

- Conclusion: Why MCP Matters for the Future of AI

- References

MCP in AI: An Overview

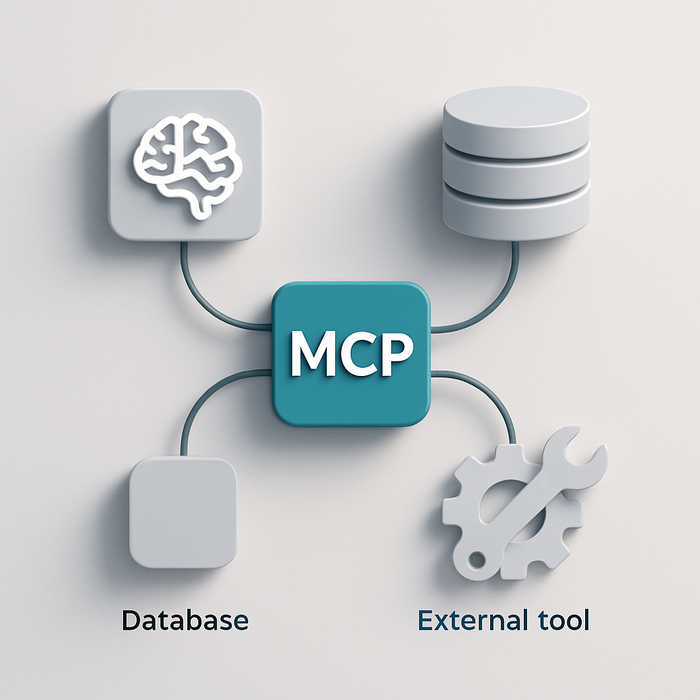

Model Context Protocol (MCP) is an open, universal standard for integrating artificial intelligence models with external tools, data sources, and workflows. Developed by Anthropic and embraced by leading cloud providers like Google Cloud and Microsoft Azure, MCP enables AI systems to communicate with a diverse array of resources using a standardized protocol. Think of MCP as the “USB-C for AI”—a single connector that replaces a web of custom, one-off integrations.

By enabling real-time data access, function execution, and secure interoperability, MCP is laying the foundation for truly connected, autonomous AI agents. It’s the backbone for the next generation of intelligent applications, from supply chain optimization to finance, healthcare, and beyond.

Origins and Evolution of Model Context Protocol

MCP was conceived to address the proliferation of fragmented, costly integrations that hampered AI adoption. Launched in late 2024 by Anthropic, the protocol quickly gained traction as enterprises and cloud vendors sought a more scalable approach to AI tool integration. MCP is now governed by a working group including Microsoft, Google, and Anthropic, with a roadmap focused on greater security, edge computing, and backwards compatibility. The protocol continues to evolve, shaping how AI applications connect and collaborate across the digital ecosystem.

How MCP Differs from Traditional AI Integration

MCP vs. Custom Connectors

Historically, connecting AI models to external tools required building custom connectors—a time-consuming, error-prone process. Each new tool or data source needed its own interface, leading to the “M×N problem” (where M is the number of models and N is the number of systems to integrate). With MCP, this is transformed into an “M+N” model: each AI host builds a single MCP client, and each tool exposes a standardized MCP server. This reduces development time, minimizes maintenance, and accelerates deployment across diverse contexts.
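The arithmetic behind the M×N versus M+N comparison can be sketched in a few lines. The function names and the sample values of M and N below are illustrative assumptions, not figures from any deployment.

```python
def pairwise_connectors(models: int, systems: int) -> int:
    """Custom integration: every model needs its own connector to every system."""
    return models * systems


def mcp_components(models: int, systems: int) -> int:
    """MCP: one client per AI host plus one server per external system."""
    return models + systems


if __name__ == "__main__":
    # Sample sizes chosen only for illustration.
    for m, n in [(3, 5), (10, 40)]:
        print(f"M={m}, N={n}: custom={pairwise_connectors(m, n)}, "
              f"MCP={mcp_components(m, n)}")
```

With 10 models and 40 systems, the custom approach implies 400 connectors, while the MCP approach implies 50 components. The gap widens as either number grows.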

Benefits Over Traditional APIs

MCP does not replace APIs; an MCP server typically wraps an existing API. What MCP standardizes is how AI applications discover, query, and authenticate against external resources. Because every integration follows the same conventions, teams can expect fewer integration errors and easier scaling as AI applications grow more complex.

MCP Architecture: Hosts, Clients, and Servers

Client-Server Framework

The heart of MCP is its client-server architecture:

- Hosts: AI applications (e.g., a chat assistant such as Claude or ChatGPT, or an AI-enabled IDE) that need to interact with external systems on behalf of a model.

- Clients: Components embedded in the host; each client maintains a dedicated connection to one MCP server.

- Servers: Standalone programs or remote services that expose tools, data (resources), and prompts to the AI host.

This separation allows a single AI host to simultaneously access multiple MCP servers—each representing a separate system, dataset, or tool—without bespoke integration work. For example, an AI assistant could book a flight, check inventory, and retrieve user profiles by connecting to three different MCP servers in parallel.
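The host, client, and server roles can be modeled in-process as a rough sketch. This is not the official MCP SDK and involves no real transport: the class names, server names, and tools (`book_flight`, `check_stock`, `get_profile`) are all hypothetical, chosen to mirror the example above.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict


@dataclass
class Server:
    """Hypothetical stand-in for an MCP server exposing named tools."""
    name: str
    tools: Dict[str, Callable[..., str]]


@dataclass
class Client:
    """Lives inside the host; one client per server connection."""
    server: Server

    def call(self, tool: str, **arguments) -> str:
        return self.server.tools[tool](**arguments)


@dataclass
class Host:
    """The AI application; it owns one client for each connected server."""
    clients: Dict[str, Client] = field(default_factory=dict)

    def connect(self, server: Server) -> None:
        self.clients[server.name] = Client(server)

    def call(self, server_name: str, tool: str, **arguments) -> str:
        return self.clients[server_name].call(tool, **arguments)


def build_demo_host() -> Host:
    """Connect one host to three independent (made-up) servers."""
    host = Host()
    host.connect(Server("travel", {
        "book_flight": lambda destination: f"booked flight to {destination}"}))
    host.connect(Server("inventory", {
        "check_stock": lambda sku: f"{sku}: 12 in stock"}))
    host.connect(Server("profiles", {
        "get_profile": lambda user_id: f"profile for {user_id}"}))
    return host
```

Calling `build_demo_host().call("travel", "book_flight", destination="Lisbon")` routes the request through the client bound to the `travel` server. Adding a fourth server requires one more `connect` call and no change to the host logic.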

Unique Insight

What sets MCP apart is its modularity: it enables service providers to update or swap out tools on their end without breaking AI application compatibility. This “plug-and-play” approach is a step-change from rigid, hardcoded integrations.

Core Protocol Mechanics: Tools, Resources, and Prompts

MCP uses JSON-RPC 2.0 as its message format, and servers expose three main primitives:

- Tools: Functions the AI can call—such as querying a database or executing a business workflow.

- Resources: Data structures providing context (e.g., product catalog, user records) without triggering side effects.

- Prompts: Structured templates or workflows guiding AI behavior during complex or sensitive operations.

For instance, in a customer support scenario, an AI host might use a tool to retrieve real-time order status, reference the resource list of customer details, and follow a prompt to ensure responses meet compliance standards. This abstraction simplifies AI tool integration and function orchestration across diverse environments.
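As a rough illustration of the customer support scenario, the three requests might look like the JSON-RPC 2.0 messages built below. The method names follow the MCP specification's `tools/call`, `resources/read`, and `prompts/get`. The tool name, the `crm://` URI, the prompt name, and all arguments are hypothetical.

```python
import json
from itertools import count

# JSON-RPC requests carry an id so responses can be matched to them.
_ids = count(1)


def make_request(method: str, params: dict) -> dict:
    """Build a JSON-RPC 2.0 request envelope."""
    return {"jsonrpc": "2.0", "id": next(_ids), "method": method, "params": params}


# Tool: a function call, which may have side effects.
tool_call = make_request(
    "tools/call",
    {"name": "get_order_status", "arguments": {"order_id": "A-1001"}},
)

# Resource: read-only context identified by a URI.
resource_read = make_request("resources/read", {"uri": "crm://customers/42"})

# Prompt: a server-defined template filled in with arguments.
prompt_get = make_request(
    "prompts/get",
    {"name": "compliant_reply", "arguments": {"tone": "formal"}},
)

if __name__ == "__main__":
    for message in (tool_call, resource_read, prompt_get):
        print(json.dumps(message))
```

All three primitives share the same envelope; only the `method` and `params` differ, which is what lets one client implementation talk to any server.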

Standardizing AI Integrations with MCP

MCP addresses the root cause of integration friction: the lack of a universal standard. Each tool declares its inputs as a JSON Schema, hosts discover the available tools at runtime, and authorization follows one common flow, which makes it practical to standardize AI integrations at scale. Developers can expose new capabilities through a single MCP server, and any compatible AI host can connect without hand-crafted glue code or weeks-long onboarding.

This is especially powerful for organizations with complex IT landscapes spanning cloud, on-premises, and third-party services. MCP acts as middleware, bridging old and new systems under a common integration layer.
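To make discovery and schema checking concrete, here is a minimal sketch of a host inspecting a tool listing and checking arguments before making a call. The listing is loosely modeled on the result of an MCP `tools/list` request (name, description, `inputSchema`). The `check_stock` tool is hypothetical, and the validator covers only a tiny subset of JSON Schema, so a real host would use a full validator.

```python
from typing import Any, Dict, List

# Hypothetical tool listing; each tool describes its input with a JSON Schema.
TOOLS: List[Dict[str, Any]] = [
    {
        "name": "check_stock",
        "description": "Return the stock level for a SKU.",
        "inputSchema": {
            "type": "object",
            "properties": {
                "sku": {"type": "string"},
                "warehouse": {"type": "string"},
            },
            "required": ["sku"],
        },
    }
]

# Only these primitive JSON types are handled in this sketch.
_JSON_TYPES = {"string": str, "integer": int, "number": (int, float), "boolean": bool}


def find_tool(tools: List[Dict[str, Any]], name: str) -> Dict[str, Any]:
    """Look up a discovered tool by name."""
    for tool in tools:
        if tool["name"] == name:
            return tool
    raise KeyError(f"no such tool: {name}")


def validate_arguments(tool: Dict[str, Any], arguments: Dict[str, Any]) -> List[str]:
    """Return a list of problems; an empty list means the call looks valid."""
    schema = tool["inputSchema"]
    properties = schema.get("properties", {})
    errors = []
    for key in schema.get("required", []):
        if key not in arguments:
            errors.append(f"missing required argument: {key}")
    for key, value in arguments.items():
        spec = properties.get(key)
        if spec is None:
            errors.append(f"unknown argument: {key}")
        elif not isinstance(value, _JSON_TYPES[spec["type"]]):
            errors.append(f"wrong type for {key}: expected {spec['type']}")
    return errors
```

Because the schema travels with the tool description, the host needs no tool-specific code: the same `validate_arguments` function works for any tool a server advertises.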

Security and Privacy in MCP

Granular Data Controls and User Consent

Security is a core design concern in MCP. The specification calls for explicit user consent before data is shared or a tool is executed, and hosts can mask sensitive information before it reaches the AI model. For remote servers, MCP defines an OAuth-based authorization flow; deployments commonly pair it with credential vaults and execution sandboxes to prevent malicious or accidental misuse of connected tools.
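One way a host might combine consent and masking is sketched below. This is an assumption about host-side design, not behavior defined by the protocol itself: `guarded_call`, the `approve` callback, the `lookup_customer` tool, and the email-only masking rule are all hypothetical.

```python
import re
from typing import Any, Callable, Dict

# Deliberately simple pattern; real deployments would mask more than emails.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def mask_sensitive(text: str) -> str:
    """Redact email addresses before the text is handed to the model."""
    return EMAIL.sub("[email redacted]", text)


def guarded_call(
    tool: Callable[..., str],
    arguments: Dict[str, Any],
    approve: Callable[[str, Dict[str, Any]], bool],
) -> str:
    """Run a tool only after the user approves, then mask its output."""
    if not approve(tool.__name__, arguments):
        raise PermissionError(f"user declined tool call: {tool.__name__}")
    return mask_sensitive(tool(**arguments))


def lookup_customer(customer_id: str) -> str:
    """Hypothetical tool that returns a record containing personal data."""
    return f"Customer {customer_id}: jane.doe@example.com"


if __name__ == "__main__":
    always_yes = lambda name, args: True  # stands in for a real consent dialog
    print(guarded_call(lookup_customer, {"customer_id": "42"}, always_yes))
```

Keeping the consent check and the masking step in one wrapper means no tool result can reach the model without passing through both.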

Privacy and Regulatory Compliance

For sectors handling regulated data (healthcare, finance, etc.), MCP deployments can add granular audit trails and policy enforcement at the host or server layer, making it easier to achieve compliance without compromising on interoperability. This approach to secure AI data sharing is helping organizations embrace AI with confidence.
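An audit trail of the kind described here could be as simple as a wrapper that records every tool invocation. The sketch below is an assumption about how a deployment might do this; the `audited` helper, the log format, and the `transfer` tool with its 1000 limit are invented for illustration.

```python
import json
from datetime import datetime, timezone
from typing import Any, Callable, List


def audited(tool: Callable[..., Any], log: List[str], user: str) -> Callable[..., Any]:
    """Wrap a tool so each call appends one JSON line to the audit log."""

    def wrapper(**arguments: Any) -> Any:
        entry = {
            "time": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "tool": tool.__name__,
            "arguments": arguments,
        }
        try:
            result = tool(**arguments)
            entry["outcome"] = "ok"
            return result
        except Exception as exc:
            entry["outcome"] = f"error: {type(exc).__name__}"
            raise
        finally:
            # Runs on success and on failure, so no call goes unrecorded.
            log.append(json.dumps(entry, sort_keys=True))

    return wrapper


def transfer(amount: int) -> str:
    """Hypothetical tool with a simple policy limit."""
    if amount > 1000:
        raise ValueError("amount exceeds policy limit")
    return "done"
```

Wrapping tools this way records failed and rejected calls as well as successful ones, which is usually what an auditor needs to see.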

Real-World Use Cases of MCP

- Supply Chain Optimization: DHL reportedly integrated AI with SAP and IoT data via MCP, with reported results of 37% fewer bottlenecks and over 98% delivery accuracy.

- Financial Services: JPMorgan Chase reportedly uses MCP for live compliance checks and fraud detection, with a reported 29% reduction in false positives.

These examples illustrate the value of the AI application interoperability and real-time data access that MCP makes possible.

Unique Perspective

Beyond large enterprises, MCP is also being piloted in startups and SMEs, democratizing powerful AI-driven automation even for organizations without massive IT budgets.

Comparing MCP and APIs: Efficiency, Cost, and Scale

Traditional API-based integrations often mean hundreds of hours per connector, high error rates, and scattered authentication methods. With MCP, connector build time is reported to fall to roughly 20 hours, error rates drop, and authentication is handled in one consistent way. The difference is not just cost but operational agility: businesses can deploy new AI-powered capabilities in days, not months.

For cloud-native organizations, MCP makes it possible to connect AI to cloud services with minimal friction, while on-premises adopters benefit from a standardized way to expose legacy systems to modern AI.

Measuring ROI: Business Impact of MCP

MCP’s impact is measurable in reduced integration costs, faster time to market, and improved service levels. For example, companies report latency reductions from 800-1200 ms (custom APIs) to 200-400 ms (MCP), and more accurate data exchange. These performance improvements translate to tangible business value—higher customer satisfaction, lower operational risk, and increased innovation throughput.

Emerging Trends and Future Directions for MCP

The MCP working group is advancing the protocol to include support for post-quantum encryption and lightweight deployments on edge devices, expanding its reach to IoT and highly secure environments. Semantic versioning is also on the horizon, promising smoother upgrades and long-term stability.

Unique Insight: As AI agents evolve, MCP could serve as the substrate for multi-agent systems—where many autonomous AIs coordinate across a web of shared tools and data, unleashing new business models and collaborative possibilities.

Challenges in Adopting MCP

- Legacy System Integration: Older, on-premises systems may lack APIs, requiring additional middleware or custom adapters.

- Skills Gap: Many enterprises cite a lack of internal MCP expertise as a barrier. Upskilling and access to training resources are key.

- Initial Cost: Early adoption may involve up-front investment ($50k–$200k) for platform, middleware, and staff training—though ROI is typically high in the long run.
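For the legacy-system challenge in the list above, a custom adapter often amounts to a thin function that translates an old data format into something a tool can return. The sketch below assumes a made-up fixed-width export (6-character SKU, 4-character quantity, then a description); the record layout, sample data, and `check_stock` tool are hypothetical.

```python
from typing import Dict, List, Union

Record = Dict[str, Union[str, int]]

# Sample lines in the assumed fixed-width layout of a legacy export file.
LEGACY_EXPORT: List[str] = [
    "AB1234  12Steel bracket",
    "ZX9   0003Rubber seal   ",
]


def parse_legacy_record(line: str) -> Record:
    """Split one fixed-width line into named fields."""
    return {
        "sku": line[0:6].strip(),
        "quantity": int(line[6:10]),
        "description": line[10:].strip(),
    }


def check_stock(sku: str) -> Record:
    """Adapter a server could expose as a tool: look up one SKU."""
    for line in LEGACY_EXPORT:
        record = parse_legacy_record(line)
        if record["sku"] == sku:
            return record
    raise KeyError(f"unknown SKU: {sku}")
```

The legacy system itself is untouched: only the adapter knows about the fixed-width format, and everything beyond it sees ordinary structured data.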

Despite these challenges, the trend toward standardized, open AI connectivity is clear. Market momentum and growing ecosystem support are steadily reducing these barriers.

Clarifying MCP vs. Other AI Acronyms

It’s important to distinguish Model Context Protocol from the McCulloch-Pitts neuron model (also abbreviated MCP), which refers to an early, unrelated neural network concept. Within the context of modern AI integration, MCP exclusively refers to the protocol standardizing connections between models and external systems.

Frequently Asked Questions

- What does MCP in AI stand for?

- MCP stands for Model Context Protocol, an open standard enabling AI models to securely connect with tools, data, and workflows.

- How is MCP different from traditional APIs?

- Unlike traditional APIs that require bespoke integrations for each new tool, MCP provides a universal connector that standardizes discovery, authentication, and data exchange.

- Who supports and maintains the MCP standard?

- The MCP protocol is maintained by a working group including Anthropic, Microsoft, and Google, ensuring continuous evolution and community input.

- Is MCP secure enough for sensitive industries?

- Yes. MCP includes granular data controls, user consent mechanisms, and advanced authentication to meet the requirements of regulated industries like finance and healthcare.

- What are the main adoption challenges with MCP?

- Key challenges include integrating legacy systems, addressing the skills gap, and managing initial deployment costs. However, these are steadily being addressed as the ecosystem matures.

Conclusion: Why MCP Matters for the Future of AI

The rise of the Model Context Protocol marks a turning point in the evolution of AI—from isolated, static models to dynamic, interconnected agents. By solving the integration bottleneck, MCP empowers organizations to rapidly deploy, scale, and innovate with AI. Its open, secure, and developer-friendly approach is already driving success in logistics, finance, and beyond. As MCP adoption spreads and the protocol matures, expect to see new classes of AI-enabled applications and more agile, intelligent enterprises. The future of AI is not just smarter—it’s more connected.
